Abstract

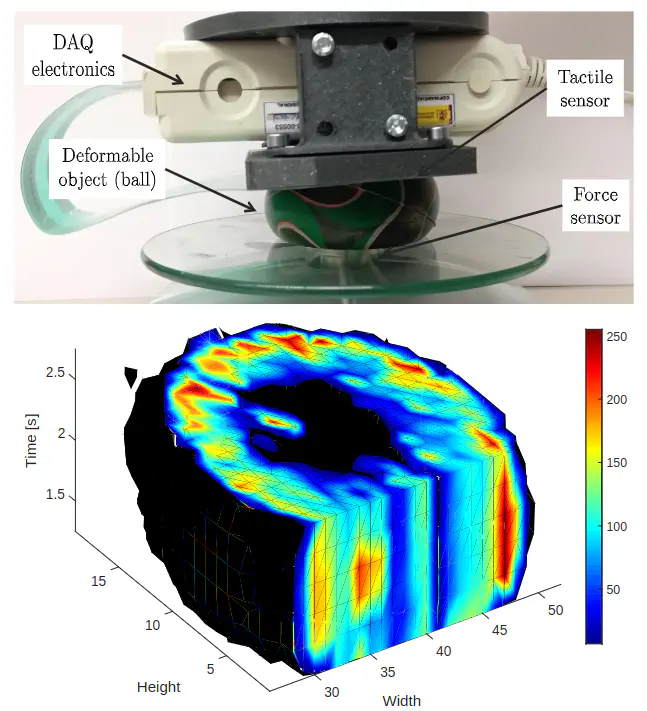

In this paper, a new concept of active tactile perception based on deep learning is presented. A tactile sensor is used to acquire sequences of tactile images of deformable objects as different forces are applied. Each sequence can therefore be represented as a 3D tactile tensor, in a similar way to the image sequences used in Magnetic Resonance Imaging (MRI). In this case, however, each 2D frame represents the pressure distribution under a given applied force, and the third dimension represents time or the variation of the applied force. To exploit this structure, a 3D Convolutional Neural Network (3D CNN) called TactNet3D was designed to classify tactile information from 9 deformable objects. A dataset of 540 tactile sequences, each a [28×50×10] tactile tensor, is used to train, validate and test TactNet3D. The results show that it classifies the deformable objects from these time series of pressure distributions with an accuracy of 96.39%.
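To make the data layout concrete, the sketch below stacks ten 2D pressure maps into one [28×50×10] tensor and applies a naive single-channel 3D convolution, the basic operation a 3D CNN layer performs across rows, columns and the force/time axis. This is a minimal illustration under stated assumptions, not TactNet3D itself. The helper names `stack_frames` and `conv3d_valid` are hypothetical. The frames-last layout and the reading of 28 as rows and 50 as columns are also assumptions, and bias, channels and nonlinearities are omitted. The random frames used in the usage note are synthetic placeholders, not sensor data.

```python
import numpy as np

# Assumed tensor layout: 28 rows x 50 columns of taxels per frame,
# 10 frames per sequence (one per applied-force step), frames on the last axis.
ROWS, COLS, FRAMES = 28, 50, 10


def stack_frames(frames):
    """Hypothetical helper: stack 2D pressure maps into a [rows x cols x frames] tensor."""
    # The third axis indexes time / the variation of the applied force.
    return np.stack(frames, axis=-1)


def conv3d_valid(volume, kernel):
    """Hypothetical helper: single-channel 3D cross-correlation, 'valid' padding.

    Each output value is the sum of an element-wise product between the kernel
    and a 3D patch spanning neighbouring taxels AND neighbouring force steps,
    which is what lets a 3D CNN pick up how pressure evolves over the sequence.
    """
    kr, kc, kf = kernel.shape
    out_shape = (volume.shape[0] - kr + 1,
                 volume.shape[1] - kc + 1,
                 volume.shape[2] - kf + 1)
    out = np.zeros(out_shape)
    for i in range(out_shape[0]):
        for j in range(out_shape[1]):
            for k in range(out_shape[2]):
                patch = volume[i:i + kr, j:j + kc, k:k + kf]
                out[i, j, k] = np.sum(patch * kernel)
    return out
```

For example, stacking ten synthetic 28×50 frames gives a tensor of shape `(28, 50, 10)`, and a 3×3×3 kernel applied without padding yields a `(26, 48, 8)` feature map. Deep learning frameworks usually expect a channels-first batch layout instead. For instance, PyTorch's `torch.nn.Conv3d` takes inputs shaped `(N, C, D, H, W)`, so a real pipeline would reorder and batch the axes accordingly.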

Type

Publication

In IEEE World Haptics Conference (WHC) 2019