Multimodal haptic object recognition: Can kinesthetic inference compensate for the lack of tactile sensing resolution?

Abstract

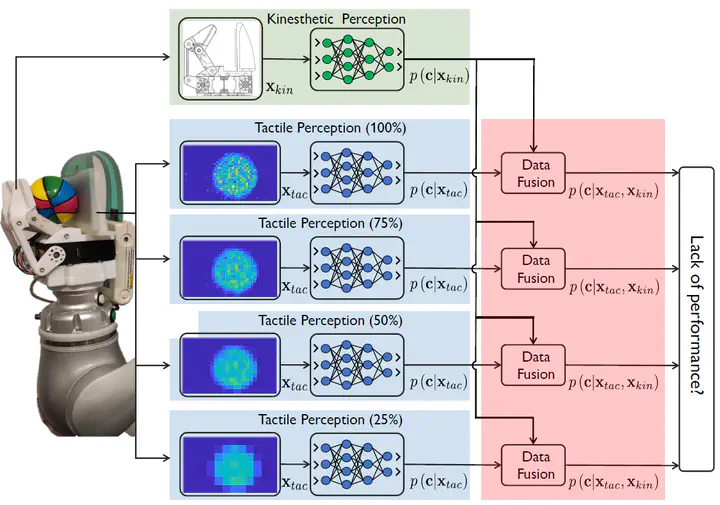

Haptic perception arises from the integration of cutaneous and kinesthetic cues, yet achieving human-level tactile performance in robotic systems remains technically demanding and economically costly. This raises a fundamental question: how much does spatial resolution truly matter for robotic touch, and can kinesthetic inference compensate when tactile resolution is limited? To investigate this, we conduct a study on haptic object recognition using an underactuated, sensorized gripper equipped with a high-resolution tactile array and joint angle sensors. We evaluate a dataset of 36 objects collected under a squeeze-and-release exploratory procedure (EP). Tactile sequences are downsampled to four resolutions (100%, 75%, 50%, and 25%) using bicubic interpolation, and ConvLSTM-based models are trained to quantify accuracy degradation as spatial detail decreases. We then apply two fusion strategies from our previous work, Bayesian inference and neural-based inference, which combine the tactile and kinesthetic modalities, and assess whether kinesthetic cues can offset the loss of tactile precision. The results quantify the performance drop as tactile resolution decreases and provide promising evidence that multimodal fusion can partially recover the lost capability. The two fusion methods also exhibit distinct behaviors in terms of robustness and consistency. As discussed in detail in the manuscript, these findings suggest that, under the right conditions, reliable object recognition may not depend solely on maintaining high tactile resolution, opening new possibilities for designing more scalable and cost-efficient haptic perception systems.
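To make the idea of Bayesian fusion concrete, the sketch below combines the class posteriors of a tactile classifier and a kinesthetic classifier with a generic product rule. It is an illustration only, not the method from the manuscript: it assumes the two modalities are conditionally independent given the object class, and the function name `fuse_posteriors`, its parameters, and the example probabilities are all hypothetical.

```python
def fuse_posteriors(p_tactile, p_kinesthetic, prior=None):
    """Fuse two per-class posterior vectors with a naive Bayes product rule.

    Assumes (hypothetically) that tactile and kinesthetic observations are
    conditionally independent given the class, so that
        p(c | t, k)  is proportional to  p(c | t) * p(c | k) / p(c).
    """
    n = len(p_tactile)
    if len(p_kinesthetic) != n:
        raise ValueError("posterior vectors must have the same length")
    if prior is None:
        prior = [1.0 / n] * n  # uniform class prior (an assumption)
    if len(prior) != n:
        raise ValueError("prior must have one entry per class")

    # Unnormalized fused score for each class.
    scores = [pt * pk / pc for pt, pk, pc in zip(p_tactile, p_kinesthetic, prior)]
    total = sum(scores)
    if total == 0.0:
        raise ValueError("modalities assign zero joint probability to every class")

    # Normalize so the fused posterior sums to one.
    return [s / total for s in scores]


def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=lambda i: values[i])


# Illustrative (made-up) posteriors over three objects: the tactile model is
# ambiguous, as it might be at low resolution, while the kinesthetic model
# clearly favors class 1.
tactile = [0.40, 0.35, 0.25]
kinesthetic = [0.20, 0.70, 0.10]
fused = fuse_posteriors(tactile, kinesthetic)
```

In this made-up example the tactile model alone picks class 0, whereas the fused posterior picks class 1, which is the kind of compensation effect the study sets out to measure.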

Type

Publication

In IEEE Sensors Journal